Jean-Loup Guillaume, Matthieu Latapy and Damien Magoni

Computer Networks 50, pages 3197-3224, 2006. An extended abstract was published in the proceedings of the 24th IEEE international conference Infocom’05, Miami, USA, 2005.

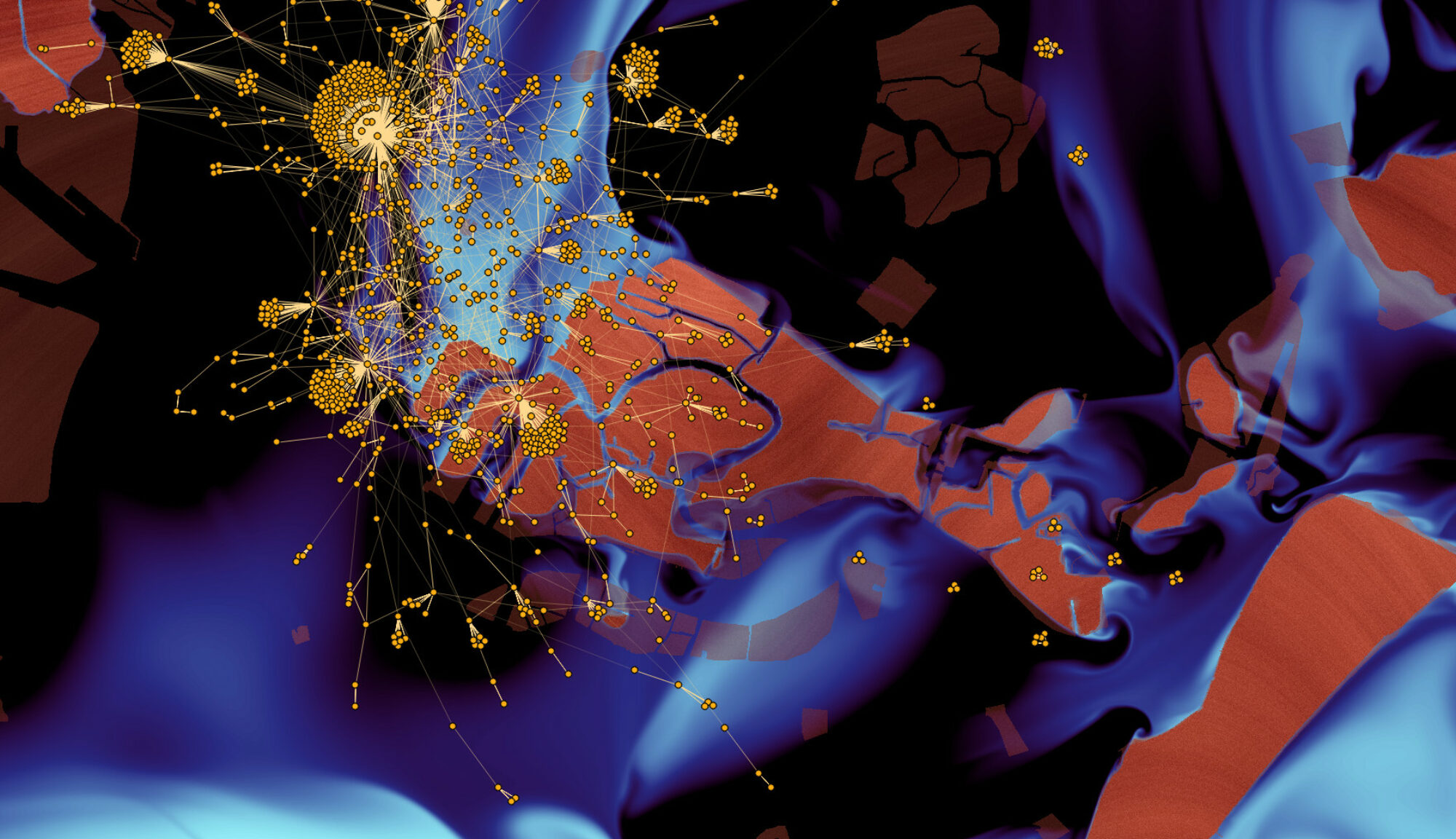

Internet maps are generally constructed using the traceroute tool from a few sources to many destinations. It has recently been shown that this exploration process gives a partial and biased view of the real topology, which has led to the idea of increasing the number of sources to improve the quality of the maps. In this paper, we present a set of experiments we conducted to evaluate the relevance of this approach. The results indicate that the statistical properties of the underlying network strongly influence the quality of the obtained maps, and that this quality can be improved using massively distributed explorations. Conversely, some statistical properties are very robust, so their known values for the Internet may be considered reliable. We validate our analysis using real-world data and experiments, and we discuss its implications.